KING19

-

Posts

385 -

Days Won

1

Posts posted by KING19

-

-

On 2/18/2023 at 7:31 PM, Papusan said:

That's a steal? I don't think so. Even $2850 is far too much. Every 4090 mobile is castrated in one way or another. But why pay a 40%+ premium for slightly less crippled performance? You could buy a decent desktop PC with the difference between those options ($1300+). Or just save the money or pay down your credit card debt. The best option would still be to stay far away from the laptop models. None of these models is meant for 4K gaming anyway. The most crippled 4090 model should manage 1440p without much of a drawback versus the less crippled one at $4000+. The same goes for 1080p gaming.

See also Eluktronics Mech-16 GP and Mech-17 GP2 are the first GeForce RTX 4090 laptops to retail for under US$3000

Max-P 175W. They should instead use Max-Q 175W or just brand it 4090 mobile. The best would be 4080 Max-Q (+150W), because the laptop card doesn't perform like a true 4080 desktop card.

It's still a steal for a laptop that normally costs over $4000 with those specs, but I agree with you. If I were going to spend that much money on a PC, it would be a desktop by far, and it would be a much better investment. 4K gaming is pointless on a laptop anyway, as there isn't much to gain from it given the small screen size.

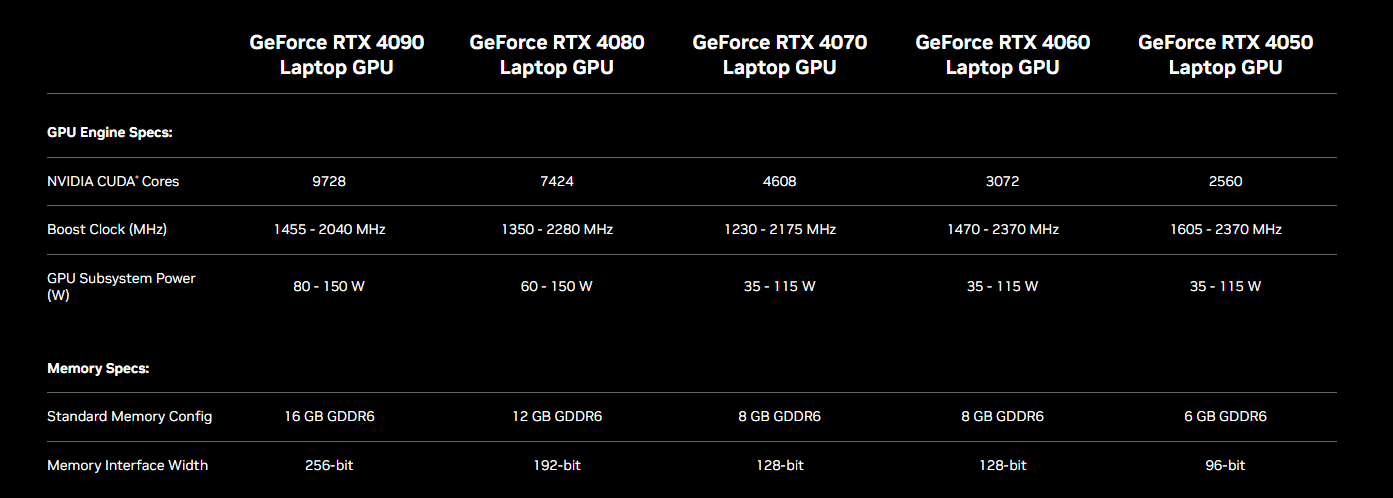

NVIDIA hasn't learned anything from the 30 series. It's worse and more confusing than ever with the 40 series, because each 40 series mobile GPU has a bigger wattage gap than the 30 series had. Consumers purchase an RTX 4090 laptop, but it turns out to be a lower-wattage version that is weaker than a Max-P RTX 4080 mobile... Calling them either Mobile or Laptop GPUs would help somewhat, so no one compares them with the desktop versions.

23 hours ago, Papusan said:

And it gets better… What a massive upgrade over previous gen xx70 mobile. Why waste money on this?

Mechrevo claims GeForce RTX 4070 Laptop GPU is only 11% to 15% faster than RTX 3070

I'm not surprised; look at the spec sheet above. Fewer CUDA cores than its predecessor, and on a 128-bit bus... DLSS 3 and frame generation will be the selling points.

-


19 hours ago, Mr. Fox said:

Good and bad are determined based on what supports or calls attention to the stupidity of their agenda, not what is actually good or bad. Common sense and an elemental, animalistic level of decency gets in the way of their agenda.

Yeah we're living the real life version of The Onion starring our government. 😁

Remember to trust the science 😎

-


16 hours ago, Papusan said:

You can get a 4090 laptop below $3000, but why should you? You pay a premium for the cheaper 4080 silicon, which is power castrated on top of that.

The good news is that you can grab an RTX 4090 laptop for $2,851

That's a steal compared with spending over $4000 on the big brands for the same specs. But it doesn't mention the wattage of the RTX 4090, even on their website, which is a turn-off:

https://www.cyberpowerpc.com/system/Tracer-VII-Edge-I17E-LC-500

Currently it's sold out.

The biggest sellers will be the mid-range gaming laptops (RTX 4070, RTX 4060, and RTX 4050), which are releasing sometime this week, and then things will get interesting.
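For anyone who wants the price gap in numbers, here is a rough sketch. It uses the $2,851 figure from the linked article and treats $4,000 as a floor for the big-brand models (the thread only says "over $4000", so the real gap is at least this large). The function name is made up for illustration.

```python
def premium_percent(cheaper: float, pricier: float) -> float:
    """Return how much more the pricier option costs, as a percentage of the cheaper one."""
    return (pricier - cheaper) / cheaper * 100.0


budget_price = 2851.0     # price quoted for the sub-$3000 RTX 4090 laptop
big_brand_price = 4000.0  # assumed floor; the thread only says "over $4000"

gap_dollars = big_brand_price - budget_price
gap_percent = premium_percent(budget_price, big_brand_price)

print(f"Gap: ${gap_dollars:.0f} ({gap_percent:.1f}% premium)")
```

With those inputs the premium works out to roughly 40%, which lines up with the "+40% premium" mentioned earlier in the thread.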

-


Overclocked Nvidia mobile RTX 4090 GPU can beat even a desktop RTX 3090 Ti

But is it worth it to spend over $4000 on an RTX 4090 laptop to barely beat a desktop RTX 3090 Ti? The cooling plays a major part in achieving it.

Imagine if it wasn't gimped to 175W; then things would be interesting.

-


14 hours ago, Papusan said:

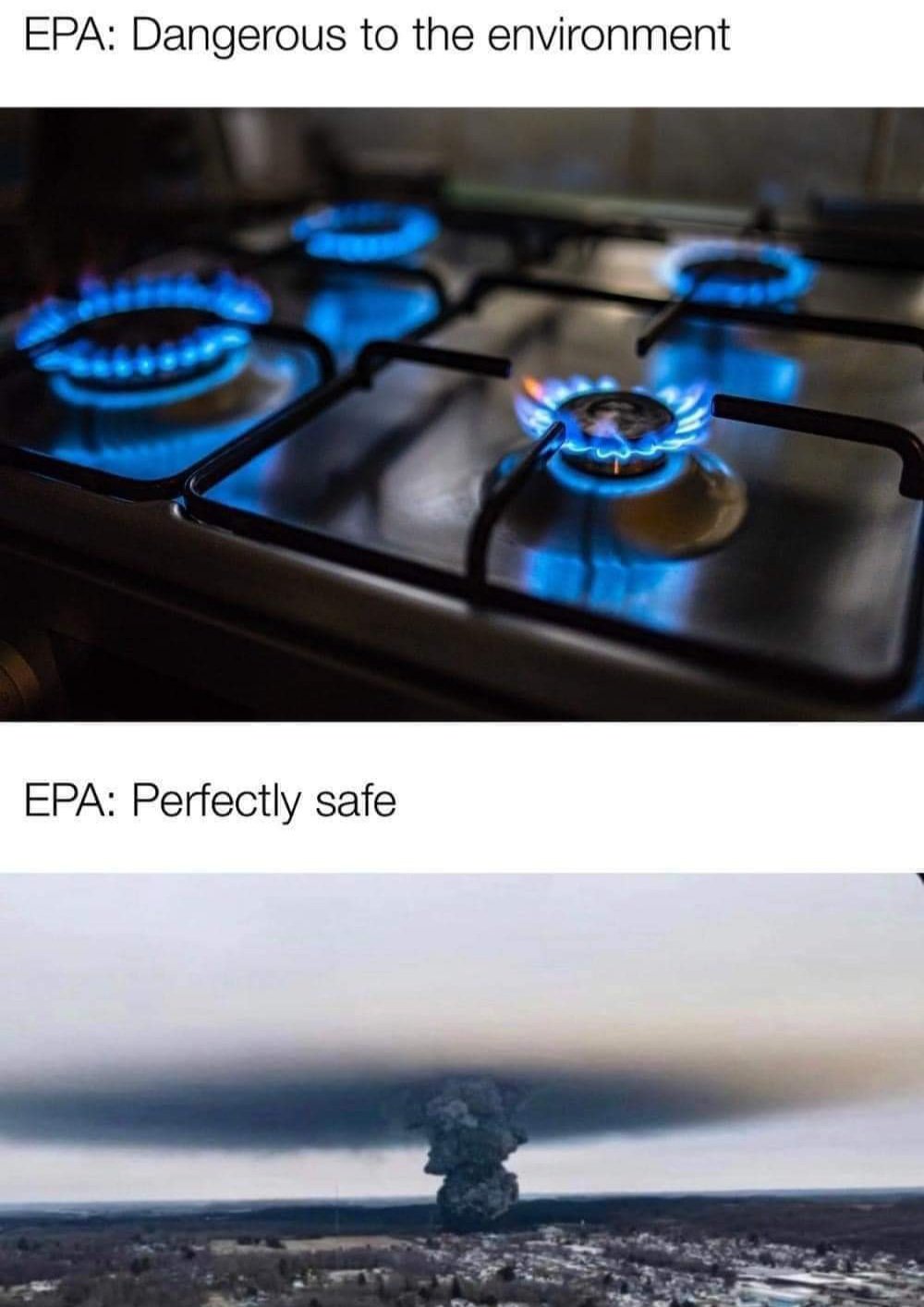

Nvidia prefers that you buy a new laptop at least every second year. And 12GB VRAM is cheaper than 16GB, so.... Why not?😀 And from what I know, the 4060 comes with 8GB VRAM. Gaming laptops with a 4060 are probably OK for e-mail, YouTube and internet😎

Only from 16 GB you are really carefree...

They probably got tired of people not buying new laptops every 2 years, so they're trying to go back to the pre-Pascal days 😁

Hogwarts Legacy is just one game, and its graphics aren't anything special, so I don't get why its textures use so much VRAM. It's another bad console port, but not as bad as Forbroken (Forspoken).

-

19 hours ago, DarginMahkum said:

You simply don't get it, do you? The laptop does not support what the CPU or chipset supports; it supports what the full functional unit specification "CAN" support, which is from the CPU to the SO-DIMM slot. Although the CPU can support 128 GB, if the SO-DIMM specification does not support it due to, for example, possible interference between very narrow pins, the laptop will not support it, that is it. 262 pins, not 268. Pin count of the SO-DIMM module, not the DDR5 chips.

I did not ask any questions. Read the post carefully.

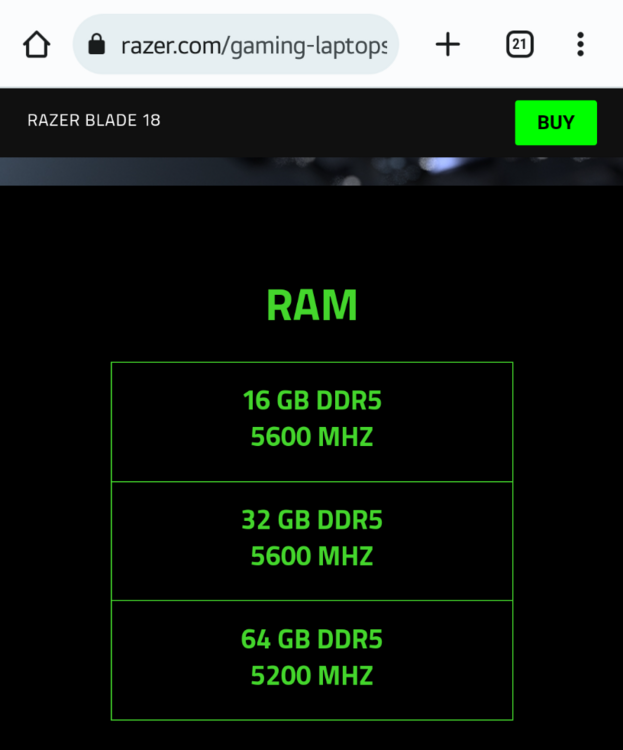

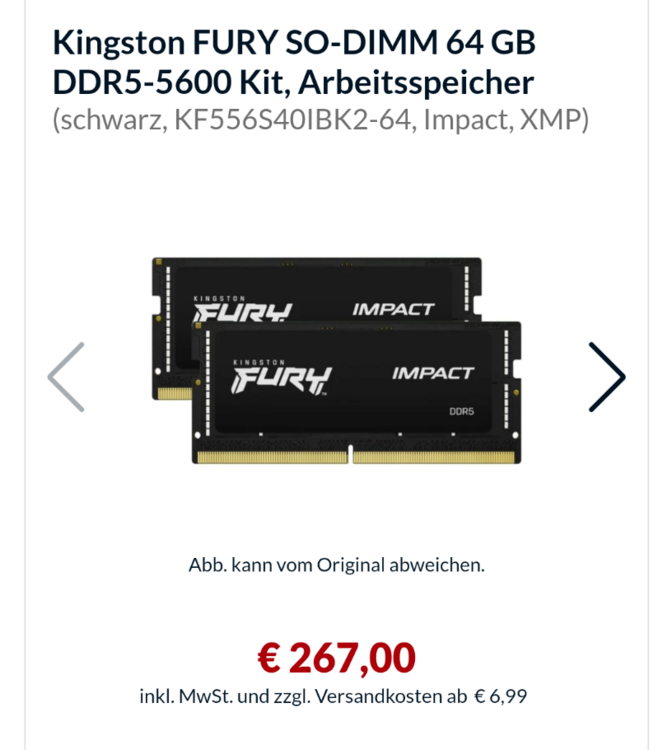

Yes it does..... I'm done repeating myself because you won't listen. It still doesn't change what I said: for now the laptop will support a maximum of 64GB of RAM in total for that reason, until 64GB DDR5 SO-DIMM sticks appear on the market one day (years from now, I think, because 64GB is still a lot of RAM for a laptop). Also, a module will use the same number of pins no matter what its capacity, so there won't be any interference like you're saying. If it did use fewer or more pins, it wouldn't be compatible with the laptop, for obvious reasons.

Also, I made a mistake and you're right that DDR5 SO-DIMM laptop RAM only uses 262 pins. Since DDR5 SO-DIMM laptop RAM uses 262 pins, it'll be compatible with most laptops, including the Razer that you mentioned. 4800MHz, 5200MHz, and 5600MHz modules all use the same number of pins.

"Anyway, back to the topic: It is just weird that Lenovo does not mention the possible memory configurations (including 64GB) properly, whereas Razer does."

^I don't know how to split quotes on this forum, but anyway, even though you didn't ask a question, it still doesn't change my reply at all.

Anyway, let's move on and get back on topic. I don't want us to keep going back and forth in this thread over nothing.

-


20 hours ago, DarginMahkum said:

If you instead of repeating the same thing over and over again would read my posts properly, you would see that I already mentioned that post in my second post.

I don't know the specifications of the chipset, what timings are supported, how the bus lines are allocated to a slot, what the specifications of SODIMM are. And as you can see from the Razer spec, there's a limitation of the timings etc., so it is not just providing the pins, provide enough DRAM chips and it will just work. There are other limitations at play for specifying what a single slot can support. I didn't study the standards and the memory controller, so as someone developing software (and hardware) for many years, I would be careful not to use definitive statements about what is possible before making sure per standards it is possible to have 64GB on single slot properly functioning (also don't forget that larger modules used for servers have different standards like LRDIMM compared to what consumer desktops and laptops use).

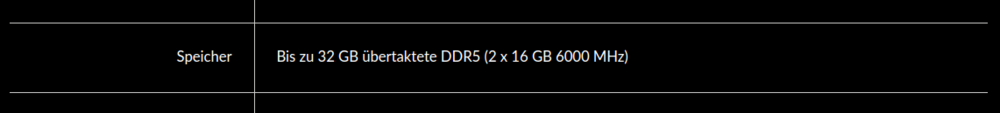

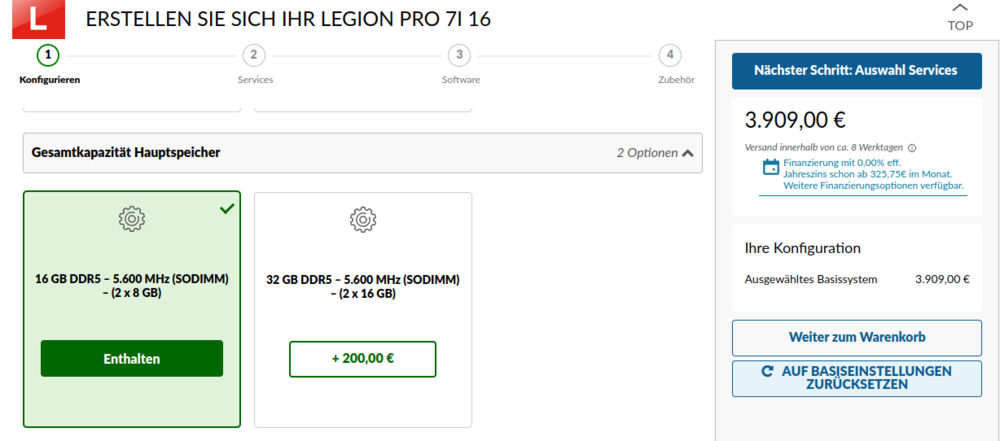

Anyway, back to the topic: It is just weird that Lenovo does not mention the possible memory configurations (including 64GB) properly, whereas Razer does.

I'm repeating myself because you're not listening, especially to my last post, but I also don't want to go back and forth with you about it:

"Again, the laptop supports whatever the CPU/chipset supports, which is 128GB, but since there are no 64GB DDR5 RAM sticks out there yet, the maximum possible RAM capacity is still 64GB."

According to the spec sheet of the Legion Pro 7, it can use either 4800MHz or 5600MHz RAM, and it'll use the same 268-pin SO-DIMM count as other DDR5 modules no matter what the capacity.

To answer your question and stay on topic, I have no idea why Lenovo doesn't mention the possible memory configurations. Like I said in my last post, it was the same in previous generations, so they go by the maximum memory they offer.

-


On 2/10/2023 at 4:28 PM, Papusan said:

It's not a surprise. We all already knew that would happen, as long as desktop GPUs keep getting more power hungry every gen while laptop GPUs stay stuck at 175W and have fewer CUDA cores.

It was fun while it lasted, when the 10, 16, and 20 series laptop GPUs were very close in performance to the desktop versions. Correct me if I'm wrong, but didn't those GPUs use the exact same chip for both desktops and laptops? That would explain why both versions were very close in performance.

-


20 hours ago, DarginMahkum said:

I don't know why I am having this ping pong with you. "Can" support is one thing; what the system "supports" (e.g. 2 slots instead of four) is another thing. Last generation is one thing, this generation is another thing. I earn a living doing DSP and embedded systems programming, and have been programming for many years. So it is not that I don't know what CPU support means, but I am just referring to the Lenovo specification. That is it. True, I am missing the part about how the recent DRAM controller address bus etc. are and can be configured, but it is still weird that they specify a max of 32 GB in their specs. And yes, there is only one 32GB stick @ 5600 MHz I could find, but it is not that it is impossible to buy or super expensive.

Anyway, my last post on this topic especially as I am not considering buying this laptop.

Again, the laptop supports whatever the CPU/chipset supports, which is 128GB, but since there are no 64GB DDR5 RAM sticks out there yet, the maximum possible RAM capacity is still 64GB. Also read below:

On 2/9/2023 at 9:50 PM, IamTechknow said:

Be sure to check the spec sheet at the bottom of the page:

https://psref.lenovo.com/syspool/Sys/PDF/Legion/Legion_Pro_7_16IRX8H/Legion_Pro_7_16IRX8H_Spec.pdf

There's a note or disclaimer that "The max memory is based on the test results with current Lenovo® memory offerings. The system may support more memory as the technology develops." Otherwise the max memory says "Up to 32GB DDR5-5600 offering". I like to believe this is per module, not sure why those two words aren't there but I'm sure Jarrod can clarify that.

Offering*

Lenovo and other OEMs rarely offered RAM configurations above 32GB on their websites, and it was the same in previous generations. Hell, in Gen 5 and Gen 6 they didn't offer configurations above 16GB, but the laptops could still be upgraded to 64GB of RAM. Even if they did offer more, they would overcharge customers for it anyway, especially for DDR5-5600.
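The argument above boils down to taking the smaller of two limits: what the CPU can address, and what you can physically install given the slots and the biggest stick on sale. A minimal sketch of that reasoning follows. The 128GB CPU limit and the 32GB and 64GB module sizes come from the thread; the two-slot count and the function name are assumptions for illustration, and the sketch ignores any board-level or SO-DIMM spec limits raised in the replies.

```python
def max_installable_ram_gb(cpu_limit_gb: int, slots: int, largest_module_gb: int) -> int:
    """Upper bound on RAM: capped by the CPU limit and by slots x largest module available."""
    return min(cpu_limit_gb, slots * largest_module_gb)


CPU_LIMIT_GB = 128  # i9-13900HX figure quoted in the thread
SLOTS = 2           # assumed slot count for this laptop

# Today: the largest DDR5 SO-DIMM you can buy is 32GB.
today = max_installable_ram_gb(CPU_LIMIT_GB, SLOTS, largest_module_gb=32)

# Later: if 64GB DDR5 SO-DIMMs reach the market.
future = max_installable_ram_gb(CPU_LIMIT_GB, SLOTS, largest_module_gb=64)

print(f"Max RAM today: {today}GB, with 64GB sticks: {future}GB")
```

So the module size is the bottleneck right now, not the CPU, which is the point being made in the post.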

-


9 hours ago, DarginMahkum said:

Again, it has nothing to do with what the processor can support. It is what is given in technical specification from Lenovo. I am also not sure if it is per slot thing, as I have never seen a per slot specification without giving the number of slots before.

Maybe there are no 32GB 5600 SODIMM modules? Maybe even if there are, there's a problem with this in a 2x32 configuration? I am not sure, but the post from IamTechknow kind of confirms this.

The laptop itself can support whatever the processor supports, and previous gens of Lenovo Legions could also support up to 64GB of RAM because of what Intel and AMD processors supported.

I just said that it's very tough to get your hands on one, not only the kit but the stick itself. However, 32GB DDR5 4800MHz RAM sticks are way more common.

6 hours ago, saturnotaku said:

There are other rather notable downgrades to the Pro 7 versus last year's models. Some of them I knew, others were found by digging through the PSREF (PSREF Legion Legion Pro 7 16IRX8H (lenovo.com)). They are:

- In addition to the Ethernet port being flipped the wrong way (release prong down) like it was on the 2021 Legion line, it's now 1 Gbps instead of 2.5.

- The rear lights that indicate which port is where are gone.

- Here's the big one: The trackpad is now plastic instead of glass. This would also imply that the top deck is plastic like the Legion 5/5 Pro.

I'm very disappointed in the last change and am now seriously considering cancelling my order.

Edit: Jarrod discusses these things and more in his video.

Just as we thought, it's a massive downgrade. If they wanted to save money so badly, they could have just kept the 2022 Legion 7 design without changing anything. The good news is that they finally added fan control, so you're no longer forced to use a 3rd party app for it.

-


1 hour ago, DarginMahkum said:

It is not about upgrading yourself, the spec says max 32GB - meaning 64GB is not supported. Maybe something to do with the overclocked configuration, I am not sure.

It's supported because the i9-13900HX can support up to 128GB of RAM.

The problem is that it's very tough to get your hands on a 64GB DDR5 5600MHz RAM kit, though.

-

7 minutes ago, jlp0209 said:

I am surprised too, usually the Legion 7 appears in spring or even summer. I am torn about the 4080 vs 4090, not sure how much I'd benefit from 4090.

I heard on Reddit that Jarrod is releasing a review of it tomorrow (Friday). Same exact specs.

-

12 hours ago, DarginMahkum said:

It's always been that way. If you want to upgrade the RAM to 64GB, it's best to buy it from Newegg, Amazon, or other places and do it yourself, because OEMs always overcharge for it.

1 hour ago, jlp0209 said:

Fyi, Legion Pro 7i w/ i9 and 4080 is live on Lenovo's site. I ordered using a 5% off code and 6% cash back through Rakuten. Delivers end of Feb so I have time to cancel if initial reviews don't look good.

Legion Pro 7i Gen 8 (16″ Intel) | AI-tuned Gaming Laptop | Lenovo US

Wow, it's a shock that the new Legions are available this soon lol. Looking forward to the review!

-

12 hours ago, kojack said:

It's the same broken record every time a new version of windows is released. The only time it was warranted was me edition. Every other version was awesome. VISTA and 11 being my two favourites.

It's the same broken record because it still remains true today, and it's the same reason companies wait years to switch to a new OS. Win 11 is still buggy, and many people who rush to upgrade end up breaking their PCs. In fact, it hasn't added anything new over Win 10 yet. Oh yeah, older computers can't upgrade to Win 11 because of the TPM 2.0 requirement. There are workarounds, but they're pretty difficult for the average user. Another bad move from Microsoft...

-


16 hours ago, 1610ftw said:

It gives an idea what could be accomplished if notebooks really had a proper 4090 chip and 24GB memory. Performance would probably be about on par with a 4080 desktop card and then give or take a few percentage points depending on TGP.

Yeah, agreed, plus using GDDR6X and having a 384-bit bus, but that's not going to happen. Laptop GPUs will be stuck at 175W unless something changes.

9 hours ago, Shark00n said:

4080 is barely faster than 3080Ti, which was already barely faster than 3080.

How is frame generation synonymous with 'great performance'? They're fake frames. I'd say that tech is nice when you have less than 60FPS, still wouldn't use it, and pretty pointless over 60FPS.

A 15FPS to 35FPS increase in games isn't a small increase whatsoever, especially at 1440p. Also, the tests were done without DLSS/FG on. According to the data in Jarrod's video, the RTX 4080 averages 28% above the RTX 3080 Ti. That's still a major performance jump.

-

15 hours ago, saturnotaku said:

Reviews are starting to come out. HUB tests the 4090 while Jarrod's Tech compares the 4080 vs the 3080 Ti.

That is a pretty big jump over the RTX 3080 Ti, and even the RTX 4080 is faster than the RTX 3080 Ti. Add DLSS 3, aka frame generation, to that and you're going to have great performance. Too bad the laptop versions get destroyed by the desktop versions, so the crazy prices for them aren't justified.... Still waiting on the RTX 4070 and RTX 4060 laptops to hit the market.

-

On 2/4/2023 at 10:59 AM, JeanLegi said:

Apex on the CPU should be no problem the contact pressure is good enough for this with the GE76 Raider.

For the GPU it was not enough, so I used TFX on the GPU.

But since Honeywell I don't wanna use something else 😄

Once you go Honeywell, you ain't going back 😎.

-


On 2/3/2023 at 8:20 AM, Etern4l said:

Rejection of flawed or harmful tech doesn't by itself make anyone a Luddite. Delaying Windows "upgrades" for as long as possible is a time-tested quality of Windows experience maximisation strategy.

Exactly.

Upgrading to a new version of Windows that is less than 2 years old is not recommended, especially when it's still buggy as hell.

According to the OS market share, Win 11 is up to 18%, while Win 10 is still dominating at 68.7% and Win 7 is at 9.62%.

https://gs.statcounter.com/os-version-market-share/windows/desktop/worldwide

Win 11 is going to be a forgettable OS with Win 12 releasing sometime next year.

-


4 hours ago, ryan said:

i ran msi afterburner and monitored tgp it was a constant 115w

this is why i am frustrated

https://www.notebookcheck.net/NVIDIA-GeForce-RTX-3060-Mobile-GPU-Benchmarks-and-Specs.497453.0.html

scroll down to timespy and look at top scores .. a 115w 3060 scores 9500 stock

and to passersby

the reason for all of this is so I can push my system to the limits but more importantly get over 60fps in crysis remastered while running and shooting etc, basically always above 60fps maxed with raytracing set to performance in 1080p....here's an example

screens don't show it but trust me it's 63 in game running

You're pretty much at your limit for a 100W RTX 3060, or rather 115W because of Dynamic Boost. The average score on Notebookcheck is for the 130W version, now 140W because OEMs released a VBIOS update to increase the wattage to 140W. Also, I just noticed that Crysis Remastered has DLSS now and you have it turned off. You can achieve your goal if you turn DLSS on.

2 hours ago, Rage Set said:

We have lots of below zero weather for all of you...except @Papusan here in Connecticut. Right now 15F (-9.4C), but with the wind chill 3 degrees. It is going negative tonight and most of tomorrow. Perhaps it is a garage overclocking type of night.

Yeah, we're getting it too. Been out in it all day at work wearing 4 layers lol.

-

On 1/27/2023 at 3:26 PM, cylix said:

Haha 😆

I was right. The game is just badly optimized...... The graphics don't look any better than a typical AAA game; even games like RDR2, Cyberpunk 2077, Assassin's Creed Unity, etc. have better graphics than Forbroken. The next Crysis? More like the next GTA IV or even Saints Row 2, because those games are still the kings of bad optimization... Even with modern hardware you'll struggle playing them.

I'll try out the demo to see how it runs on my 1660 Ti laptop. Wanna bet it won't be pretty even with FSR lol 😅

-


Update on Honeywell7950SP (Paste):

After almost 8 months it finally degraded: my temps were repeatedly hitting the 90s C, and over 100C at times, when playing games like Cyberpunk, even with the laptop elevated. My laptop fans were pretty dusty, but even after cleaning them my temps didn't change, so I was forced to repaste. After removing the heatsink I found the paste was almost dry and had a lot of gaps where the CPU and GPU dies were exposed. This time when I repasted, I used the spatula that came in the Honeywell 7950 package to spread the paste. Like I said before, it's a thick paste, almost like putty, and I covered the CPU and GPU dies completely with a thick layer.

Not the cleanest job as it was late at night, but very effective, especially when you see my temps and Cinebench score below:

After running Cinebench 3 times, not only did I get my highest score, but my CPU temps never went above 88C, and in games they stayed in the 70s C range! I'm still not sure how long it takes to cure, but the results took effect very quickly this time!

-


On 1/22/2023 at 2:26 AM, Sandy Bridge said:

I was expecting higher requirements with the title "The Next Crysis". This game can run on an Ivy Bridge Quad-Core processor that was released over a decade ago, soon to be 11 years, and video cards that are equivalent to my RX 480 that I bought in 2016, MSRP $240 for the base 8 GB version. Positively pedestrian requirements!

Now granted that's 720p 30 FPS, but when I tried to run the OG Crysis on my 8600M GT laptop, which was the 4th-most powerful laptop GPU available when I bought it mere months before Crysis's release (behind the 8700M GT, 7950 GTX, and just barely behind the 7900 GS; the 8800M series had not yet launched), I got 22 FPS at, IIRC, 800x600. That was stretching the bounds of playable! Now I have a laptop that, for half the price of my 2007 Crysis-playing laptop, with no adjustments for inflation, is just about at the "Recommended specs", and should easily hit 1080p 30 FPS given the differences.

Point being that a lot more systems, as a percentage of all gaming rigs, are going to be able to play this game without upgrades than were able to play Crysis without upgrades when it came out.

Really the minimum requirements aren't much more than Crysis Remastered's, either, especially considering that game came out over two years ago:

Crysis Remastered

OS: Windows 10 64-bit

Processor: Intel Core i5-3450 / AMD Ryzen 3

Memory: 8GB

Storage: 20GB

Direct X: DX11

GPU: Nvidia GeForce GTX 1050 Ti / AMD Radeon 470

GPU memory: 4GB in 1080p

More VRAM and RAM and storage, but the processor is still a quad-core Ivy, and the GPU is only half a tier up, seems like a modest jump overall for two years aside from the GTA-like storage space requirements.

My take is their marketing department did a good job of predicting what would go viral by releasing super-high-end "Ultra" specs when most games only have "Minimum" and "Recommended", and the Internet isn't realizing that while not low-end, aside perhaps from storage this game's requirements really aren't that outrageous.

At least Crysis has some excuses, like being a PC exclusive and being so far ahead of its time graphics-wise that consoles like the PS3 and Xbox 360 weren't able to handle the game until years later, and even then they had to downgrade the graphics somewhat and it still stutters. Even a desktop 8800 GTX couldn't run it well on High, barely getting above 30FPS, and Ultra is worse, below 30FPS most of the time. With the remastered version you can run it at 1080p and get 60FPS+ on low settings with the minimum requirements.

Forspoken doesn't have any excuses. Graphics-wise it doesn't look any better than other AAA games, especially on the PS5. The recommended specs are just as bad as the minimum specs: you need a desktop RTX 3070 to run it at 1440p (QHD) 30FPS.... Mind you, the desktop RTX 3070 is more powerful than the current strongest laptop GPU, the 3080 Ti. The game will be released on the 24th, so we'll find out the truth!! I'm leaning towards the game being badly optimized.

-


On 1/21/2023 at 9:01 PM, Mr. Fox said:

I think so. While ray tracing is not necessary for a game to be enjoyable, the visual quality enhancement is nothing short of remarkable. Far more realistic graphics than without it. It doesn't make a fun game more fun or a boring game less boring. I never choose a game because it has it, but the difference it makes in the graphics quality is undeniable. If you have not been exposed a whole lot to it, then it is hard to relate to and easy to dismiss.

That is true, but at the same time it's still a gimmick, because only high-end GPUs can handle it, and barely, with DLSS/FSR on to get playable FPS, which also introduces more issues like ghosting and shimmering at times. Companies are pushing RT so hard when the majority of people don't have the specs to use it properly...

-


RTX 4000 mobile series officially released.

in Tech News

Posted

I am a Wizard!

Talk about castration at the highest level... Also worse performance at QHD+ than the RTX 3070 Ti because it's only on a 128-bit bus...

Like I said before, they're making DLSS 3 and frame generation the main selling points for the mid-range gaming laptops, and sadly people are going to fall for it.