Everything posted by electrosoft

-

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Regarding the Taichi 7900xtx, as long as it is at retail and not scalpy prices, if you want it, get it. 🙂 With my 4090, I knew the only two models I wanted (Suprim X Liquid or FE, in that order) and just waited till one was back at retail and picked it up. I don't regret it at all (like I did the KPE 3090ti, a smidge). -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

True, I just realized my return window is June 13th, so I can test them a bit more than a few weeks. 🙂 -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

I'm quite surprised you haven't picked one up yet with all that other high powered hardware. At 1440p it would definitely challenge your 13900k and then some, even at 6GHz, methinks. LOL, I thought this too. With all the hardware he's picked up, I'm surprised one of the things he hasn't picked up is a 4090. Blocked and chilled, it would really outclass his 3090. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

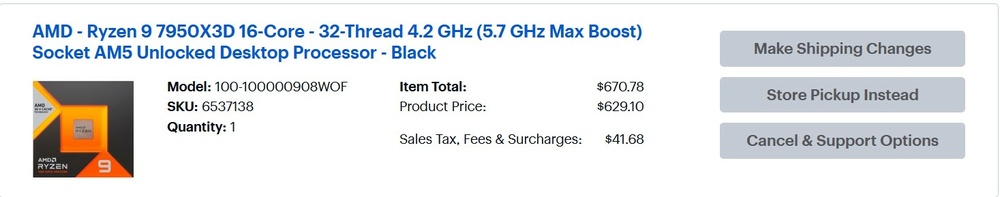

Decided to snag a 7950X3D from Best Buy too and compare them extensively over the next few weeks to see which one I want to keep. I plan on testing max fclk/PBO on the 7800X3D and running all the normal benchmarks, fun times and gaming runs (WoW), then swapping in the 7950X3D, rinse and repeat. First I'll make sure everything is up to date, lock down a static flight path, then re-tweak/verify and run my 12900k 5.2 all core through the same paces for comparative data. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

** Beast mode activated ** Looking forward to some serious IMC taxing. 🙂 -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

MSI board from Amazon warehouse "Open Box" arrived in a brown box with no original box or manuals, but the motherboard itself was brand new and all the accessories were in there too, factory sealed. According to MSI, the production date was March, with the Jan BIOS already installed. The 7800X3D booted right up and I just updated from there, no blind flash needed. The M-die sticks are the pair I've had since Aug 2022. They were heatsinked, but I didn't realize they also had A-RGB leads on them, so I plugged them into an extra hub I had and voilà! 7800X3D installed in my test case, up and alive with the M-die sticks: Initial run with memory at 6000 (before the next week of tweaking and dialing in the CPU and mem): -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

I would definitely wait. XTX prices are only going to continue to head down a touch. Best Buy has 13900k's on sale for $569 and available. They've been there for a while. They opted not to carry the 13900KS this time around (boo!). Amazon too at $569. There is no way Amazon and Newegg (and even Walmart) aren't going to have their cake and eat it too. Selling out of their own product to resellers for a profit, then turning around and letting them list it jacked up to collect a marketplace cut, is easy money on the table. If they didn't offer a Marketplace, those resellers would just run to eBay/StockX/etc. and sell them there. It comes down to the consumer to exercise fiscal restraint (we say behind our 4090s lol) when gouging and scalping are clearly taking place. I've never bought from a scalper or gouger and I never will. I will wait or not buy. I don't need anything that badly. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Asrock Taichi 7900xtx is my favorite of all the 7900xtx cards followed closely by the Nitro+ VC. Taichi has a superior PCB design (again) like their 6900xt/6950xt Formula OC. If I pick up another 7900xtx, it is definitely going to be a Taichi 7900xtx. With the way prices are falling a bit, I'd wait on the 7900xtx Taichi for at least a +X off coupon from Newegg or a flat out reduction. -

Nice work and nice video bro!

-

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Armory Crate has devolved into a malware mess that Asus has purposefully gone out of their way to make a requirement. When a company issues BIOS updates that stop the motherboard from retaining its settings between power downs, forcing you to run their software to re-enable them, that is malicious and targeted. Before the 2000+ series BIOS updates for the D4, the motherboard retained the settings via CMOS even when powered off or disconnected completely. After? Now they lose their settings when powered off, even plugged in and with CMOS, and you need to run Armory Crate to re-enable them. Luckily OpenRGB supports the D4, so setting them back is no problem. I removed all that garbage from my Asus Strix G18 (before returning it) and like clockwork it was running better and not stuttering all over the place. Of course, on their laptops Armory Crate is even more required for system control.....

MSI used to be better too, until they decided to abandon Mystic Light, group everything together into their Dragon software and go the way of Asus in requiring a massive install to do even trivial settings. Asrock still gives you some BIOS control over their lighting, which I appreciate as it doesn't require their software unless you want to get fancy or need even more extended control. G.Skill still lets you lock their memory RGB and disregard any software. It has also worked with most software suites I've encountered, but I prefer non-RGB memory as RGB can cause heating/power issues, especially when overclocking, and the heatsinks are usually trash anyhow and need replacing. Crucial RGB is trash. Their memory also required running their odd software to light up, but at least it was set and forget at boot.

EVGA is minimalist in their RGB and about as non-intrusive as it gets, even in their other software for devices. I love the fact that they refuse to make a bloated Asus/MSI-like "suite" and keep each piece of RGB software separate. They are also "set and forget" for all their devices, from MB to GPUs (RIP), KB, mouse, AIO and more. Once it is set, it is set, whether it loses power or not. I wish other companies were like this. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

I contemplated OLED but I didn't want the potential headaches. I routinely leave my displays on the same content for hours, so I am a prime candidate for burn in. As fate would have it, I was sent a 43" 4k Samsung mini LED display for free to evaluate and keep, so that was that. I love it, but make no mistake, OLED is superior from viewing angles to per pixel control. Mini LED is miles ahead of side lit displays as there is zero halo or ghosting when not in use or when a zone is set to black, but OLED beats it on blooming every day. Mini LED is brighter than OLED on average, though. The question is, can you be disciplined enough (or care enough) to cycle your content and displays to avoid potential burn in? -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Jay ranting about motherboard prices (and reflecting how I feel): -

[SOLD] LG 49WQ95C-W 49" 5120x1440 144hz display

electrosoft replied to electrosoft's topic in Computer Components

I can see where you would think that.... 😉 -

[Various] AMD Ryzen 7600x/7700x/7900x/7950x Reviews

electrosoft replied to Reciever's topic in Tech News

SaaS is a plague. I should be able to buy it outright and if...IF I think an upgraded version is worthy, I'll buy it, but if I'm content with the current version, why am I continuing to pay for it? Never mind the language that many times basically says you don't even own the product. DRM is a nuisance I'm somewhat ok with until it breaks or slows down my system or forces another purchase. -

[Various] AMD Ryzen 7600x/7700x/7900x/7950x Reviews

electrosoft replied to Reciever's topic in Tech News

7950X3D will be a nice overall bump over your 7900x, congrats! I saw Newegg is offering them now with a $25 discount, so pricing is moving in the right direction. My 7800X3D arrived yesterday and my Carbon X670E will be here today. Hopefully I can carve out some time to get it set up sooner rather than later in my workbench case. I think the 7800X3D will work out for me considering I usually run my 12900k 5.2 all core locked, P-Cores only, but I'll leave the 7950X3D as an option. Plan is to ride this till 8000 X3D unless Intel somehow drops the high heat (or the 7800X3D sucks). -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

I can see a compelling argument for either but personally I'd pick a 6950XT: -

As we've progressed through DDR, dual rank isn't as much of a priority as before. It can still provide a little bit of benefit for DDR5 but I would be more concerned with frequency, terts and primaries in that order. All things being equal, I'd get dual rank just because why not if it provides any type of tangible benefit.

-

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Best Buy has the 7800X3D in stock at actual real pricing. I went ahead and ordered one. Playtime ahead. Hopefully this turns out better than my 5800X: 4 months of USB torture before I jettisoned it for the 11900k. https://www.bestbuy.com/site/amd-ryzen-7-7800x3d-8-core-16-thread-4-2-ghz-5-0-ghz-max-boost-socket-am5-unlocked-desktop-processor-black/6537139.p?skuId=6537139 -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Agreed it isn't absolute or written in stone in regards to establishing equilibrium between VRAM exhaustion and GPU processing limitations but let's say generally as you lower settings to fit your VRAM you will also lessen the load on your GPU. And I agree the 4070 Ti definitely should have come with 16GB and the 4080 should have been equipped with 20GB. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

LOL, is that your actual motherboard being boxed up for shipping? With the newest, freshest revision I'm expecting some serious results. 🙂 This is so true. I am beyond sensitive to keyboard and mouse devices (I've lost count how many I've tried over the years). It extends to graphical settings, dead pixels, display bleed, system noise and more. Others don't even notice it but they can drive me batty. Any type of subpar fps and the dreaded "chunk" or too much latency and it will be fixed one way or the other. I just jettisoned T-Mobile Internet to go back to Xfinity because of the latency. Sometimes ignorance is bliss (In my Cypher voice). -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

I noticed his last update from a few months ago on YT community was that he had been sick for over 3 weeks with the flu/bronchitis. Hopefully Max is doing alright. I always enjoyed his content. As for the 4070, basically you're getting a 3080 w/ 2GB more memory for $100 less and all the 4000 series refinements (DLSS3, RT bump, etc.). It is a worthy card for someone looking for an upgrade. The 4070 and 4090 are the only two worthy pickups. The 4080 and 4070ti are an attempt by Nvidia to shift the pricing stack upward well outside of inflation. The 4080 bump is just so insulting.... -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

While I am a staunch proponent of the more VRAM the merrier, I do get where UFD is coming from. The argument is that you're not going to be running that high a resolution or settings anyhow on a lesser card (or if you are, it really isn't the card for you). There is a direct relation between graphics/resolution settings and GPU processing power along with VRAM. No one wants pretty eye candy chunking along at 20fps, so you would naturally lower the settings and/or resolution anyhow. But with that being said, yeah, Nvidia needs to be more like AMD and slap more memory on their mid to mid-high tier cards. -

*Official Benchmark Thread* - Post it here or it didn't happen :D

electrosoft replied to Mr. Fox's topic in Desktop Hardware

Ended up picking up an MSI X670E Carbon open box for ~$320 from Amazon for some X3D playtime. Still have my M-die heatsinked sticks ready to go. Just need to lock down on a 7950X3D or 7800X3D. I'll end up setting up and testing it in my "workbench" case (Ye ole Corsair 540) as always. My main desktop has a stack of mods and adjustments it needs in the corner anyhow so I'll knock out like 5 birds with one stone.

I'm real curious to see how it performs against my tuned 12900k for WoW and then FO76. I'm still bound by my 12900k even at 4k, especially in Raids and Valdrakken where fps can dip down into the 70s while my 12900k is bouncing off of 100% and my 4090 is sitting around 70-80%. I expected this as more player data hits and taxes the CPU, which was already bottlenecking my GPU in open areas with nobody around. Hardware Numb3rs has been inactive for quite some time and that was my usual go to spot for the type of tweaking and WoW tests I liked. Yet again a major itch I need to scratch personally.... 🙂

On one hand I lamented about this years ago. On the other hand, using recommended settings, my daughter's PC handled 4k Hogwarts just fine and looked pretty decent on her Asus 3070 KO. This was with 32GB DDR4 and a 12400 on an Asus B660. I'm much more sensitive to graphical settings though. Literally how our convo went when she was playing:

Me: "Don't you see there? See where the textures aren't as clear?"
Her: "No"
Me: "Well what about the grass there? Don't you see how the blades aren't as clear?"
Her: "No!"
Me: "Look at the facial textures. Don't you see how they could be a little clearer?"
Her: "No dad! It looks good to me"
Me: "Well what about....."
Her: "For the love of God dad, LET ME PLAY!" 🤣 -

[Various] AMD Ryzen 7600x/7700x/7900x/7950x Reviews

electrosoft replied to Reciever's topic in Tech News

I've been looking over Lasso and assignments, and the easiest way still seems to be just spending a minute disabling the non-3D CCD for gaming and enabling it for everything else, but I'll cross that bridge when I get to that use scenario down the road. I ended up going with an MSI X670E Carbon that I found open box from Amazon for ~$320. I was about to pull the trigger on the 3rd party open box for $365 when I refreshed and it popped up, so I grabbed it. I have some heatsinked M-die DDR5 2x16GB sitting on the shelf that can hit 6600. It's been on the shelf for over 6 months waiting for a home. Just need to either pony up for a 7950X3D or wait for a 7800X3D and save $250. -
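For anyone who would rather pin a game to one CCD with an affinity mask than toggle the other CCD off, here is a minimal sketch of how such a mask could be worked out. The function names are made up for illustration, and it assumes logical CPUs are numbered CCD by CCD (CCD0 first) with 8 cores per CCD and SMT on. Which CCD actually carries the 3D V-Cache on a given chip is not something this code knows, so check that on your own system first.

```python
def ccd_affinity_mask(ccd_index: int, cores_per_ccd: int = 8, smt: bool = True) -> int:
    """Return a bitmask that selects every logical CPU on one CCD.

    Assumption (verify on your system): logical CPUs are enumerated
    CCD by CCD, so CCD0 owns the lowest-numbered logical CPUs.
    """
    if ccd_index < 0 or cores_per_ccd <= 0:
        raise ValueError("ccd_index must be >= 0 and cores_per_ccd must be > 0")
    # With SMT on, each physical core shows up as two logical CPUs.
    threads_per_ccd = cores_per_ccd * (2 if smt else 1)
    # Build a run of set bits, then shift it up to the chosen CCD.
    return ((1 << threads_per_ccd) - 1) << (ccd_index * threads_per_ccd)


def logical_cpus(mask: int) -> list[int]:
    """List the logical CPU numbers whose bits are set in the mask."""
    return [i for i in range(mask.bit_length()) if (mask >> i) & 1]


if __name__ == "__main__":
    for ccd in (0, 1):
        mask = ccd_affinity_mask(ccd)
        print(f"CCD{ccd}: mask={hex(mask)} cpus={logical_cpus(mask)}")
```

The hex value is the form that Windows `start /affinity` expects, and affinity tools generally let you tick the same logical CPUs by number. This only restricts where a process is scheduled; it does not park or power down the other CCD the way disabling it does.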

[Various] AMD Ryzen 7600x/7700x/7900x/7950x Reviews

electrosoft replied to Reciever's topic in Tech News

7800X3D is a no brainer for an easy, drop-in massive boost to gaming, like the 5800X3D is for AM4. Depending on your use case, the 7950X3D makes perfect sense. I said it elsewhere, but for myself I would just disable the second CCD if it is acting inappropriately with my given games and re-enable it for normal D2D use, but if gaming is your focus, the 7800X3D is the go to chip. As for mindfactory, like any new product, let's see where it settles in after the initial launch rush, but the 7800X3D really does check all the boxes for a gamer focused CPU. I'm leaning towards the Asrock X670E PG Lightning for a potential X3D buildout. It seems to check all my boxes, including a killer price for a full X670E chipset. Coincidentally, Asrock also makes the beefiest/best PCB 7900xtx (Taichi), a repeat of their 6900xt/6950xt best PCB design with the Formula OC.